Focus and Scope

Biodiversity Data Journal (BDJ) is a community peer-reviewed, open access, comprehensive online platform, designed to accelerate publishing, dissemination and sharing of biodiversity-related data of any kind. All structural elements of the articles – text, morphological descriptions, occurrences, data tables etc. – will be treated and stored as data, in accordance with the Data Publishing Policies and Guidelines of Pensoft Publishers.

The journal will publish papers in biodiversity science containing taxonomic, floristic/faunistic, morphological, genomic, phylogenetic, ecological or environmental data on any taxon of any geological era from any part of the world with no lower or upper limit to manuscript size. For example:

- single taxon treatments and nomenclatural acts (e.g., new taxa, new taxon names, new synonyms, changes in taxonomic status, re-descriptions, etc.);

- data papers describing biodiversity-related databases, including ecological and environmental data;

- sampling reports, local observations or occasional inventories, if these contain novel data;

- local or regional checklists and inventories;

- habitat-based checklists and inventories;

- ecological and biological observations of species and communities;

- any kind of identification keys, from conventional dichotomous to multi-access interactive online keys;

- descriptions of biodiversity-related software tools.

For more information, you may look at the Editorial Beyond dead trees: integrating the scientific process in the Biodiversity Data Journal and press release The Biodiversity Data Journal: Readable by humans and machines.

Globally Unique Innovations

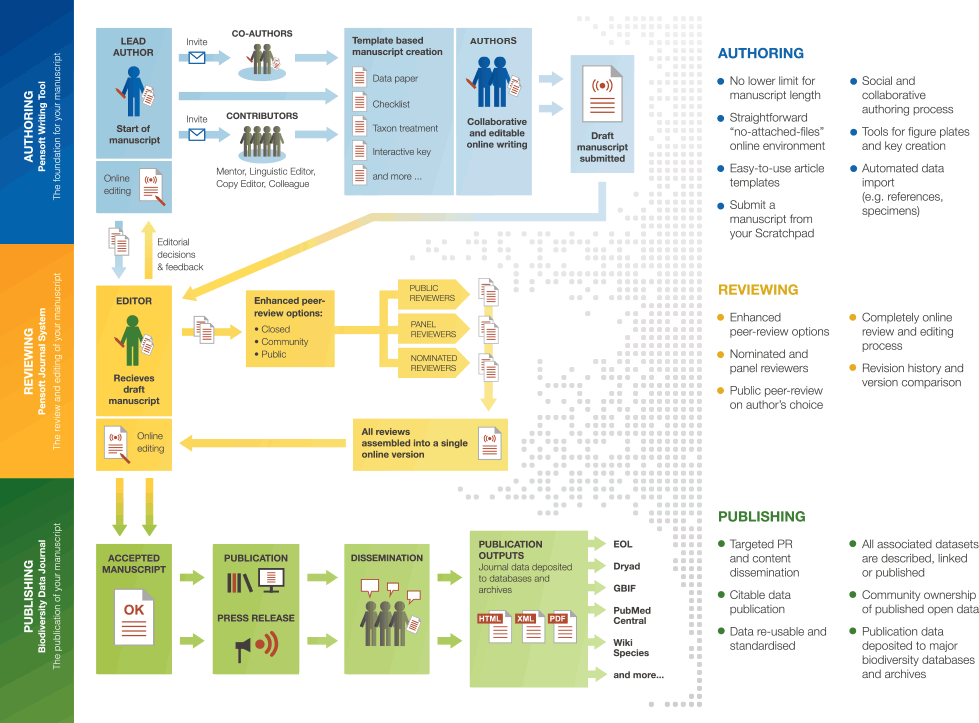

The Biodiversity Data Journal (BDJ) and the associated ARPHA Writing Tool (formerly Pensoft Writing Tool), launched within the FP7 project ViBRANT, created several, globally unique, innovations:

- The first work flow ever to support the full life cycle of a manuscript, from writing through submission, community peer review, publication and dissemination within a single online collaborative platform.

- The online, collaborative, article-authoring platform ARPHA Writing Tool (AWT) provides a large set of pre-defined, but flexible, Biological Codes and Darwin Core compliant, article templates.

- Authors may work collaboratively on a manuscript and invite external contributors, such as mentors, potential reviewers, linguistic and copy editors, colleagues, who may monitor and comment on the text before submission. These comments can be submitted along with the manuscript for editor’s consideration.

- Import/export conversion of data files into text and vice versa, from text to data, such as checklists, catalogues and occurrence data in Darwin Core format, simply at the click of a button.

- Automated import of data-structured manuscripts generated in various platforms (Scratchpads, GBIF Integrated Publishing Toolkit (IPT), authors’ databases).

- A novel community-based pre-publication peer-review and possibilities to comment after publication (post-publication peer-review). Authors may also opt for an entirely public peer-review process. Reviewers may opt to be anonymous or to disclose their names.

For more information, you may look at the Editorial Beyond dead trees: integrating the scientific process in the Biodiversity Data Journal and press release The Biodiversity Data Journal: Readable by humans and machines.

Copyright Notice

License and Copyright Agreement

In submitting the manuscript to any of Pensoft’s journals, authors certify that:

- They are authorized by their co-authors to enter into these arrangements.

- The work described has not been published before (except in the form of an abstract or as part of a published lecture, review or thesis); it is not under consideration for publication elsewhere; its publication has been approved by all author(s) and responsible authorities – tacitly or explicitly – of the institutes where the work has been carried out.

- They secure the right to reproduce any material that has already been published or where copyright is owned by someone else.

- They agree to the following license and copyright agreement:

Copyright

- Copyright on any article is retained by the author(s) or the author's employer. Regarding copyright transfers please see below.

- Authors grant Pensoft Publishers a license to publish the article and identify itself as the original publisher.

- Authors grant any third party the right to use the article freely as long as its original authors and citation details are identified.

- The article and published supplementary material are distributed under the Creative Commons Attribution License (CC BY 4.0):

Creative Commons Attribution License (CC BY 4.0)

Anyone is free:

to Share — to copy, distribute and transmit the work

to Remix — to adapt the work

Under the following conditions:

Attribution. The original authors must be given credit.

- For any reuse or distribution, it must be made clear to others what the license terms of this work are.

- Any of these conditions can be waived if the copyright holders give permission.

- Nothing in this license impairs or restricts the author's moral rights.

The full legal code of this license.

Copyright Transfers

Any usage rights are regulated through the Creative Commons License. Since Pensoft Publishers is using the Creative Commons Attribution License (CC BY 4.0), anyone (the author, their institution/company, the publisher and the public) is free to copy, distribute, transmit and adapt the work as long as the original author is credited (see above). Therefore, specific usage rights cannot be reserved by the author or their institution/company and the publisher cannot include a statement "all rights reserved" in any published paper.

Website design and publishing framework: Copyright © Pensoft Publishers.

CLOCKSS system has permission to ingest, preserve, and serve this Archival Unit.

Authorship/Contributorship

Some journals are integrated with Contributor Role Taxonomy (CRediT), in order to recognise individual author input within a publication, thereby ensuring professional and ethical conduct, while avoiding authorship disputes, gift / ghost authorship and similar pressing issues in academic publishing.

During manuscript submission, the submitting author is strongly recommended to specify a contributor role for each of co-author, i.e. Conceptualization, Methodology, Software, Validation, Formal analysis, Investigation, Resources, Data Curation, Writing - Original draft, Writing - Review and Editing, Visualization, Supervision, Project administration, Funding Acquisition (see more). For the journals that are not integrated with CRediT, the submitting author is encouraged to specify the roles as a free text. Once published the article will include the contributor role for all authors in the article metadata.

Desk Rejection

During the pre-review evaluation, Editors-in-Chief or Subject editors check the manuscript for compliance with the journal's guidelines, focus, and scope. At this point, they may reject a manuscript prior to sending it out for peer review, specifying the reasons. The most common ones are non-conformity with the journal's focus, scope and policies and/or low scientific or linguistic quality. In such cases, authors are encouraged to considerably improve their manuscript and resubmit it for a review. We encourage authors whose manuscripts have been desk rejected due to being out of the scope of this journal to consider another potentially suitable title from the Pensoft portfolio.

In case the manuscript is suitable for the journal but has to be corrected technically or linguistically, it will be returned to the authors for improvement. The authors will not need to re-submit the manuscript but only to upload the corrected file(s) to their existing submission.

Peer Review

This journal uses a single-blind peer review process. This means that the names of reviewers are hidden from the authors (the author does not know the identity of the reviewer, but the reviewer knows the identity of the author). Notwithstanding that, the reviewers are encouraged to disclose their identities, if they wish to do so. Each article is reviewed by at least two independent experts, with a final decision on acceptance being made by the Subject Editor / Editor-in-Chief. Front-matter articles, such as editorials, correspondence, biographies, and similar articles, can be published after editorial evaluation only.

Please consider the Editor and Reviewer Guidelines in the About webpage of this journal for more details and stepwise instructions on the editorial and peer review process.

Preprints

This journal allows posting preprints of the manuscripts submitted for peer-review. Authors are strongly encouraged to use the ARPHA Preprints server for that as an option available during the submission process, which will save a double effort in manuscript submission and allows the preprint to be directly linked to the published article and vice versa.

Manuscripts that contain nomenclatural acts in the sense of the biological Codes will not be posted as preprints even when the authors opt for that, to avoid possible confusion in the priority of names and validity of publication.

Indexing and Archiving

The articles published in the journal are indexed by a high number of industry leading indexers and repositories. The journal content is archived in CLOCKSS, Zenodo, Portico and other international archives. The full list of indexes and archives are shown on the journal homepage.

The authors are allowed to publish preprints of their manuscripts on ARPHA Preprints or other preprint servers. The deposition and distribution of preprints and final article versions is highly encouraged.

Advertising

Publication Ethics and Malpractice Statement

General

The publishing ethics and malpractice policies follow the Principles of Transparency and Best Practice in Scholarly Publishing (joint statement by COPE, DOAJ, WAME, and OASPA), the NISO Recommended Practices for the Presentation and Identification of E-Journals (PIE-J), and, where relevant, the Recommendations for the Conduct, Reporting, Editing, and Publication of Scholarly Work in Medical Journals from ICMJE.

Privacy statement

The personal information used on this website is to be used exclusively for the stated purposes of each particular journal. It will not be made available for any other purpose or to any other party.

Open access

Pensoft and ARPHA-hosted journals adhere strictly to gold open access to accelerate the barrier-free dissemination of scientific knowledge. All published articles are made freely available to read, download, and distribute immediately upon publication, given that the original source and authors are cited (Creative Commons Attribution License (CC BY 4.0)).

Open data publishing and sharing

Pensoft and ARPHA encourage open data publication and sharing, in accordance with Panton’s Principles and FAIR Data Principles. For the domain of biodiversity-related publications Pensoft has specially developed extended Data Publishing Policies and Guidelines for Biodiversity Data. Specific data publishing guidelines are available on the journal website.

Data can be published in various ways, such as preservation in data repositories linked to the respective article or as data files or packages supplementary to the article. Datasets should be deposited in an appropriate, trusted repository and the associated identifier (URL or DOI) of the dataset(s) must be included in the data resources section of the article. Reference(s) to datasets should also be included in the reference list of the article with DOIs (where available). Where no discipline-specific data repository exists authors should deposit their datasets in a general repository such as, for example Zenodo or others.

Submission, peer review and editorial process

The peer review and editorial processes are facilitated through an online editorial system and a set of email notifications. Pensoft journals’ websites display stepwise description of the editorial process and list all necessary instructions and links. These links are also included in the respective email notification.

General: Publication and authorship

- All submitted papers are subject to a rigorous peer review process by at least two international reviewers who are experts in the scientific field of the particular paper.

- The factors that are taken into account in review are relevance, soundness, significance, originality, readability and language.

- A declaration of potential Conflicts of Interest is a mandatory step in the submission process. The declaration becomes part of the article metadata and is displayed in both the PDF and HTML versions of the article.

- The journals allow several rounds of review of a manuscript. The ultimate responsibility for editorial decisions lies with the respective Subject Editor and, in some cases, with the Editor-in-Chief. All appeals should be directed to the Editor-in-Chief, who may decide to seek advice among the Subject Editors and Reviewers.

- The possible decisions include: (1) Accept, (2) Minor revisions, (3) Major revisions, (4) Reject, but re-submission encouraged and (5) Reject.

- If Authors are encouraged to revise and re-submit a submission, there is no guarantee that the revised submission will be accepted.

- The paper acceptance is constrained by such legal requirements as shall then be in force regarding libel, copyright infringement and plagiarism.

- No research can be included in more than one publication.

- Editors-in-Chief, managing editors and their deputies are strongly recommended to limit the amount of papers co-authored by them. As a rule of thumb, research papers (co-)authored by Editors-in-Chief, managing editors and their deputies must not exceed 20% of the publications a year, with a clear task to drop this proportion below 15%. By adopting this practice, the journal is taking extra precaution to avoid endogeny and conflicts of interest, while ensuring the editorial decision-making process remains transparent and fair.

- Editors-in-Chief, managing editors and handling editors are not allowed to handle manuscripts co-authored by them.

Responsibility of Authors

- Authors are required to agree that their paper will be published in open access under the Creative Commons Attribution License (CC BY 4.0) license.

- Authors must certify that their manuscripts are their original work.

- Authors must certify that the manuscript has not previously been published elsewhere.

- Authors must certify that the manuscript is not currently being considered for publication elsewhere.

- Authors should submit the manuscript in linguistically and grammatically correct English and formatted in accordance with the journal’s Author Guidelines.

- Authors must participate in the peer review process.

- Authors are obliged to provide retractions or corrections of mistakes.

- All Authors mentioned are expected to have significantly contributed to the research.

- Authors must notify the Editors of any conflicts of interest.

- Authors must identify all sources used in the creation of their manuscript.

- Authors must report any errors they discover in their published paper to the Editors.

- Authors should acknowledge all significant funders of the research pertaining to their article and list all relevant competing interests.

- Other sources of support for publications should also be clearly identified in the manuscript, usually in an acknowledgement (e.g. funding for the article processing charge; language editing or editorial assistance).

- The corresponding author should provide the declaration of any conflicts of interest on behalf of all authors. Conflicts of interest may be associated with employment, sources of funding, personal financial interests, membership of relevant organisations or others.

- Manuscripts in revision have to be revised and resubmitted within a reasonable time span. The authors are aware that manuscripts not revised within 100 days after the revision decision will be rejected and have, if desired by the authors, to be submitted afresh.

Responsibility of Reviewers

- The manuscripts will be reviewed by two or three experts in order to reach first decision as soon as possible. Reviewers do not need to sign their reports but are welcome to do so. They are also asked to declare any conflicts of interests.

- Reviewers are not expected to provide a thorough linguistic editing or copyediting of a manuscript, but to focus on its scientific quality, as well as for the overall style, which should correspond to the good practices in clear and concise academic writing. If Reviewers recognize that a manuscript requires linguistic edits, they should inform both Authors and Editor in the report.

- Reviewers are asked to check whether the manuscript is scientifically sound and coherent, how interesting it is and whether the quality of the writing is acceptable.

- In cases of strong disagreement between the reviews or between the Authors and Reviewers, the Editors can judge these according to their expertise or seek advice from a member of the journal's Editorial Board.

- Reviewers are also asked to indicate which articles they consider to be especially interesting or significant. These articles may be given greater prominence and greater external publicity, including press releases addressed to science journalists and mass media.

- During a second review round, the Reviewer may be asked by the Subject Editor to evaluate the revised version of the manuscript with regards to Reviewer’s recommendations submitted during the first review round.

- Reviewers are asked to be polite and constructive in their reports. Reports that may be insulting or uninformative will be rescinded.

- Reviewers are asked to start their report with a very brief summary of the reviewed paper. This will help the Editors and Authors see whether the reviewer correctly understood the paper or whether a report might be based on misunderstanding.

- Further, Reviewers are asked to comment on originality, structure and previous research: (1) Is the paper sufficiently novel and does it contribute to a better understanding of the topic under scrutiny? Is the work rather confirmatory and repetitive? (2) Is the introduction clear and concise? Does it place the work into the context that is necessary for a reader to comprehend the aims, hypotheses tested, experimental design or methods? Are Material and Methods clearly described and sufficiently explained? Are reasons given when choosing one method over another one from a set of comparable methods? Are the results clearly but concisely described? Do they relate to the topic outlined in the introduction? Do they follow a logical sequence? Does the discussion place the paper in scientific context and go a step beyond the current scientific knowledge on the basis of the results? Are competing hypotheses or theories reasonably related to each other and properly discussed? Do conclusions seem reasonable? Is previous research adequately incorporated into the paper? Are references complete, necessary and accurate? Is there any sign that substantial parts of the paper were copies of other works?

- Reviewers should not review manuscripts in which they have conflicts of interest resulting from competitive, collaborative, or other relationships or connections with any of the authors, companies, or institutions connected to the papers.

- Reviewers should keep all information regarding papers confidential and treat them as privileged information.

- Reviewers should express their views clearly with supporting arguments.

- Reviewers should identify relevant published work that has not been cited by the authors.

- Reviewers should also call to the Editors’ attention any substantial similarity or overlap between the manuscript under consideration and any other published paper of which they have personal knowledge.

Responsibility of Editors

- Editors in Pensoft’s journals carry the main responsibility for the scientific quality of the published papers and base their decisions solely on the papers' importance, originality, clarity and relevance to publication's scope.

- The Subject Editor takes the final decision on a manuscript’s acceptance or rejection and his/her name is listed as "Academic Editor" in the header of each article.

- The Subject Editors are not expected to provide a thorough linguistic editing or copyediting of a manuscript, but to focus on its scientific quality, as well as the overall style, which should correspond to the good practices in clear and concise academic writing.

- Editors are expected to spot small errors in orthography or stylistic during the editing process and correct them.

- Editors should always consider the needs of the Authors and the Readers when attempting to improve the publication.

- Editors should guarantee the quality of the papers and the integrity of the academic record.

- Editors should preserve the anonymity of Reviewers, unless the latter decide to disclose their identities.

- Editors should ensure that all research material they publish conforms to internationally accepted ethical guidelines.

- Editors should act if they suspect misconduct and make all reasonable attempts to obtain a resolution to the problem.

- Editors should not reject papers based on suspicions, they should have proof of misconduct.

- Editors should not allow any conflicts of interest between Authors, Reviewers and Board Members.

- Editors are allowed to publish a limited proportion of papers per year co-authored by them, after considering some extra precautions to avoid an impression of impropriety, endogeny, conflicts of interest and ensure that the editorial decision-making process is transparent and fair.

- Editors-in-Chief, managing editors and handling editors are not allowed to handle manuscripts co-authored by them.

Neutrality to geopolitical disputes

General

The strict policy of Pensoft and its journals is to stay neutral to any political or territorial dispute. Authors should depoliticize their studies by avoiding provoking remarks, disputable geopolitical statements and controversial map designations; disputable territories should be referred to as well-recognised and non-controversial geographical areas. Тhe journal reserves the right to mark such areas at least as disputable at or after publication, to publish editor's notes, or to reject/retract the paper.

Authors' affiliations

Pensoft does not take decisions regarding the actual affiliations of institutions. Authors are advised to provide their affiliation as indicated on the official internet site of their institution.

Editors

Editorial decisions should not be affected by the origins of the manuscript, including the nationality, ethnicity, political beliefs, race, or religion of the authors. Decisions to edit and publish should not be determined by the policies of governments or other agencies outside of the journal itself.

Human and animal rights

The ethical standards in medical and pharmacological studies are based on the Helsinki declaration (1964, amended in 1975, 1983, 1989, 1996, 2000 and 2013) of the World Medical Association and the Publication Ethics Policies for Medical Journals of the World Association of Medical Journals (WAME).

Authors of studies including experiments on humans or human tissues should declare in their cover letter a compliance with the ethical standards of the respective institutional or regional committee on human experimentation and attach committee’s statement and informed consent; for those researchers who do not have access to formal ethics review committees, the principles outlined in the Declaration of Helsinki should be followed and declared in the cover letter. Patients’ names, initials, or hospital numbers should not be used, not in the text nor in any illustrative material, tables of databases, unless the author presents a written permission from each patient to use his or her personal data. Photos or videos of patients should be taken after a warning and agreement of the patient or of a legal authority acting on his or her behalf.

Animal experiments require full compliance with local, national, ethical, and regulatory principles, and local licensing arrangements and respective statements of compliance (or approvals of institutional ethical committees where such exists) should be included in the article text.

Informed consent

Individual participants in studies have the right to decide what happens to the identifiable personal data gathered, to what they have said during a study or an interview, as well as to any photograph that was taken. Hence it is important that all participants gave their informed consent in writing prior to inclusion in the study. Identifying details (names, dates of birth, identity numbers and other information) of the participants that were studied should not be published in written descriptions, photographs, and genetic profiles unless the information is essential for scientific purposes and the participant (or parent or guardian if the participant is incapable) gave written informed consent for publication. Complete anonymity is difficult to achieve in some cases, and informed consent should be obtained if there is any doubt. If identifying characteristics are altered to protect anonymity, such as in genetic profiles, authors should provide assurance that alterations do not distort scientific meaning.

The following statement should be included in the article text in one of the following ways:

- "Informed consent was obtained from all individual participants included in the study."

- "Informed consent was obtained from all individuals for whom identifying information is included in this article." (In case some patients’ data have been published in the article or supplementary materials to it).

Gender issues

We encourage the use of gender-neutral language, such as 'chairperson' instead of 'chairman' or 'chairwomen', as well as 'they' instead of 'she/he' and 'their' instead of 'him/her' (or consider restructuring the sentence).

Conflict of interest

During the editorial process, the following relationships between editors and authors are considered conflicts of interest: Colleagues currently working in the same research group or department, recent co-authors, and doctoral students for which the editor served as committee chair. During the submission process, the authors are kindly advised to identify possible conflicts of interest with the journal editors. After manuscripts are assigned to the handling editor, individual editors are required to inform the managing editor of any possible conflicts of interest with the authors. Journal submissions are also assigned to referees to minimize conflicts of interest. After manuscripts are assigned for review, referees are asked to inform the editor of any conflicts that may exist.

Appeals and open debate

We encourage academic debate and constructive criticism. Authors are always invited to respond to any editorial correspondence before publication. Authors are not allowed to neglect unfavorable comments about their work and choose not to respond to criticisms.

No Reviewer’s comment or published correspondence may contain a personal attack on any of the Authors. Criticism of the work is encouraged. Editors should edit (or reject) personal or offensive statements. Authors should submit their appeal on editorial decisions to the Editorial Office, addressed to the Editor-in-Chief or to the Managing Editor. Authors are discouraged from directly contacting Editorial Board Members and Editors with appeals.

Editors will mediate all discussions between Authors and Reviewers during the peer review process prior to publication. If agreement cannot be reached, Editors may consider inviting additional reviewers if appropriate.

The Editor-in-Chief will mediate all discussions between Authors and Subject Editors.

The journals encourage publication of open opinions, forum papers, corrigenda, critical comments on a published paper and Author’s response to criticism.

Misconduct

Research misconduct may include: (a) manipulating research materials, equipment or processes; (b) changing or omitting data or results such that the research is not accurately represented in the article; c) plagiarism. Research misconduct does not include honest error or differences of opinion. If misconduct is suspected, journal Editors will act in accordance with the relevant COPE guidelines.

Plagiarism and duplicate publication policy

A special case of misconduct is plagiarism, which is the appropriation of another person's ideas, processes, results or words without giving appropriate credit. Plagiarism is considered theft of intellectual property and manuscripts submitted to this journal which contain substantial unattributed textual copying from other papers will be immediately rejected. Editors are advised to check manuscripts for plagiarism via the iThenticate service by clicking on the "ïThenticate report" button. Journal providing a peer review in languages other than English (for example, Russian) may use other plagiarism checking services (for example, Antiplagiat).

Instances, when authors re-use large parts of their publications without providing a clear reference to the original source, are considered duplication of work. Slightly changed published works submitted in multiple journals is not acceptable practice either. In cases of plagiarism in an already published paper or duplicate publication, an announcement will be made on the journal publication page and a procedure of retraction will be triggered.

Responses to possible misconduct

All allegations of misconduct must be referred to the Editor-In-Chief. Upon the thorough examination, the Editor-In-Chief and deputy editors should conclude if the case concerns a possibility of misconduct. All allegations should be kept confidential and references to the matter in writing should be kept anonymous, whenever possible.

Should a comment on potential misconduct be submitted by the Reviewers or Editors, an explanation will be sought from the Authors. If it is satisfactory and the issue is the result of either a mistake or misunderstanding, the matter can be easily resolved. If not, the manuscript will be rejected or retracted and the Editors may impose a ban on that individual's publication in the journals for a certain period of time. In cases of published plagiarism or dual publication, an announcement will be made in both journals explaining the situation.

When allegations concern authors, the peer review and publication process for their submission will be halted until completion of the aforementioned process. The investigation will be carried out even if the authors withdraw the manuscript, and implementation of the responses below will be considered.

When allegations concern reviewers or editors, they will be replaced in the review process during the ongoing investigation of the matter. Editors or reviewers who are found to have engaged in scientific misconduct should be removed from further association with the journal, and this fact reported to their institution.

Retraction policies

Article retraction

According to the COPE Retraction Guidelines followed by this Journal, an article can be retracted because of the following reasons:

- Unreliable findings based on clear evidence of a misconduct (e.g. fraudulent use of the data) or honest error (e.g. miscalculation or experimental error).

- Redundant publication, e.g., findings that have previously been published elsewhere without proper cross-referencing, permission or justification.

- Plagiarism or other kind of unethical research.

Retraction procedure

- Retraction should happen after a careful consideration by the Journal editors of allegations coming from the editors, authors, or readers.

- The HTML version of the retracted article is removed (except for the article metadata) and on its place a retraction note is issued.

- The PDF of the retracted article is left on the website but clearly watermarked with the note "Retracted" on each page.

- In some rare cases (e.g., for legal reasons or health risk) the retracted article can be replaced with a new corrected version containing apparent link to the retracted original version and a retraction note with a history of the document.

Expression of concern

In other cases, the Journal editors should consider issuing an expression of concern, if evidence is available for:

- Inconclusive evidence of research or publication misconduct by the authors.

- Unreliable findings that are unreliable but the authors’ institution will not investigate the case.

- A belief that an investigation into alleged misconduct related to the publication either has not been, or would not be, fair and impartial or conclusive.

- An investigation is underway but a judgement will not be available for a considerable time.

Errata and Corrigenda

Pensoft journals largely follow the ICMJE guidelines for corrections and errata.

Errata

Admissible and insignificant errors in a published article that do not affect the article content or scientific integrity (e.g. typographic errors, broken links, wrong page numbers in the article headers etc.) can be corrected through publishing of an erratum. This happens through replacing the original PDF with the corrected one together with a correction notice on the Erratum Tab of the HTML version of the paper, detailing the errors and the changes implemented in the original PDF. The original PDF will be marked with a correction note and an indication to the corrected version of the erratum article. The original PDF will also be archived and made accessible via a link in the same Erratum Tab.

Authors are also encouraged to post comments and indicate typographical errors on their articles to the Comments tab of the HTML version of the article.

Corrigenda

Corrigenda should be published in cases when significant errors are discovered in a published article. Usually, such errors affect the scientific integrity of the paper and could vary in scale. Reasons for publishing corrigenda may include changes in authorship, unintentional mistakes in published research findings and protocols, errors in labelling of tables and figures or others. In taxonomic journals, corrigenda are often needed in cases where the errors affect nomenclatural acts. Corrigenda are published as a separate publication and bear their own DOI. Examples of published corrigenda are available here.

The decision for issuing errata or corrigenda is with the editors after discussion with the authors.

COPE Compliance

Terms of Use

This document describes the Terms of Use of the services provided by the Biodiversity Data Journal journal, hereinafter referred to as "the Journal" or "this Journal". All Users agree to these Terms of Use when signing up to this Journal. Signed Journal Users will be hereinafter referred to as "User" or "Users".

The publication services to the Journal are provided by Pensoft Publishers Ltd., through its publishing platform ARPHA, hereinafter referred to as "the Provider".

The Provider reserves the right to update the Terms of Use occasionally. Users will be notified via posting on the site and/or by email. If using the services of the Journal after such notice, the User will be deemed to have accepted the proposed modifications. If the User disagrees with the modifications, he/she should stop using the Journal services. Users are advised to periodically check the Terms of Use for updates or revisions. Violation of any of the terms will result in the termination of the User's account. The Provider is not responsible for any content posted by the User in the Journal.

Account Terms

- For registration in this Journal or any of the services or tools hosted on it, Users must provide their full legal name, a valid email address, postal address, affiliation (if any), and any other information requested.

- Accounts created via this journal automatically sign in the User to the ARPHA Platform.

- Users are responsible for maintaining the security of their account and password. The Journal cannot and will not be liable for any loss or damage from failure to comply with this security obligation.

- Users are solely responsible for the content posted via the Journal services (including, but not limited to data, text, files, information, usernames, images, graphics, photos, profiles, audio and video clips, sounds, applications, links and other content) and all activities that occur under their account.

- Users may not use the service for any illegal or unauthorised purpose. Users must not, in the use of the service, violate any laws within their jurisdiction (including but not limited to copyright or trademark laws).

- Users can change or pseudonomyse their personal data, or deactivate their accounts at any time through the functionality available in the User’s personal profile. Deactivation or pseudonomysation will not affect the appearance of personal data in association with an already published work of which the User is author, co-author, editor, or reviewer.

- Users can report to the Journal uses of their personal data, that they might consider not corresponding to the current Terms of Use.

- The User’s personal data is processed by the Journal on the legal basis corresponding to Article 6, paragraph 1, letters a, b, c and f. of the General Data Protection Regulation (hereinafter referred to as GDPR) and will be used for the purpose of Journal’s services in accordance with the present Terms and Use, as well as in those cases expressly stated by the legislation.

- User’s consent to use the information the Journal has collected about the User corresponds to Article 6(1)(a) of the GDPR.

- The ‘legitimate interest’ of the Journal to engage with the User and enable him/her to participate in Journal’s activities and use Journal’s services correspond to Article 6(1)(f) of the GDPR.

Services and Prices

The Provider reserves the right to modify or discontinue, temporarily or permanently, the services provided by the Journal. Plans and prices are subject to change upon 30 days notice from the Provider. Such notice may be provided at any time by posting the changes to the relevant service website.

Ownership

The Authors retain full ownership to their content published in the Journal. We claim no intellectual property rights over the material provided by any User in this Journal. However, by setting pages to be viewed publicly (Open Access), the User agrees to allow others to view and download the relevant content. In addition, Open Access articles might be used by the Provider, or any other third party, for data mining purposes. Authors are solely responsible for the content submitted to the journal and must confirm [during the submission process] that the content does not contain any materials subject to copyright violation including, but not limited to, text, data, multimedia, images, graphics, photos, audio and video clips. This requirement holds for both the article text and any supplementary material associated with the article.

The Provider reserves the rights in its sole discretion to refuse or remove any content that is available via the Website.

Copyrighted Materials

Unless stated otherwise, the Journal website may contain some copyrighted material (for example, logos and other proprietary information, including, without limitation, text, software, photos, video, graphics, music and sound - "Copyrighted Material"). The User may not copy, modify, alter, publish, transmit, distribute, display, participate in the transfer or sale, create derivative works or, in any way, exploit any of the Copyrighted Material, in whole or in part, without written permission from the copyright owner. Users will be solely liable for any damage resulting from any infringement of copyrights, proprietary rights or any other harm resulting from such a submission.

Exceptions from this rule are e-chapters or e-articles published under Open Access (see below), which are normally published under Creative Commons Attribution 3.0 license (CC-BY), or Creative Commons Attribution 4.0 license (CC-BY), or Creative Commons Public Domain license (CC0).

Open Access Materials

This Journal is a supporter of open science. Open access to content is clearly marked, with text and/or the open access logo, on all materials published under this model. Unless otherwise stated, open access content is published in accordance with the Creative Commons Attribution 4.0 licence (CC-BY). This particular licence allows the copying, displaying and distribution of the content at no charge, provided that the author and source are credited.

Privacy Statement

- Users agree to submit their personal data to this Journal, hosted on the ARPHA Platform provided by Pensoft.

- The Journal collects personal information from Users (e.g., name, postal and email addresses, affiliation) only for the purpose of its services.

- All personal data will be used exclusively for the stated purposes of the website and will not be made available for any other purpose or to third parties.

- In the case of co-authorship of a work published through the Journal services, each of the co-authors states that they agree that their personal data be collected, stored and used by the Journal.

- In the case of co-authorship, each of the co-authors agrees that their personal data publicly available in the form of a co-authorship of a published work, can be distributed to external indexing services and aggregators for the purpose of the widest possible distribution of the work they co-author.

- When one of the co-authors is not registered in the Journal, it is presumed that the corresponding author who is registered has requested and obtained his/her consent that his/her personal data will be collected, stored and used by the Journal.

- The registered co-author undertakes to provide an e-mail address of the unregistered author, to whom the Journal will send a message in order to give the unregistered co-author’s explicit consent for the processing of his/her personal data by the Journal.

- The Journal is not responsible if the provided e-mail of the unregistered co-author is inaccurate or invalid. In such cases, it is assumed that the processing of the personal data of the unregistered co-author is done on a legal basis and with a given consent.

- The Journal undertakes to collect, store and use the provided personal data of third parties (including but not limited to unregistered co-authors) solely for the purposes of the website, as well as in those cases expressly stated by the legislation.

- Users can receive emails from Journal and its hosting platform ARPHA, provided by Pensoft, about activities they have given their consent for. Examples of such activities are:

- Email notifications to authors, reviewers and editors who are engaged with authoring, reviewing or editing a manuscript submitted to the Journal.

- Email alerts sent via email subscription service, which can happen only if the User has willingly subscribed for such a service. Unsubscription from the service can happen through a one-click link provided in each email alert notification.

- Information emails on important changes in the system or in its Terms of Use which are sent via Mailchimp are provided with "Unsubscribe" function.

- Registered users can be invited to provide a peer review on manuscripts submitted to the Journal. In such cases, the users can decline the review invitation through a link available on the journal’s website.

- Each provided peer review can be registered with external services (such as Web of Science Reviewer Recognition Service, formerly Publons). The reviewer will be notified if such registration is going to occur and can decline the registration process.

- In case the Journal starts using personal data for purposes other than those specified in the Terms of Use, the Journal undertakes to immediately inform the person and request his/her consent.

- If the person does not give his/her consent to the processing of his or her personal data pursuant to the preceding paragraph, the Journal shall cease the processing of the personal data for the purposes for which there is no consent, unless there is another legal basis for the processing.

- Users can change/correct their personal data anytime via the functionality available in the User’s profile. Users can request the Journal to correct their personal data if the data is inaccurate or outdated and the Journal is obliged to correct the inaccurate or outdated personal data in a timely manner.

- Users may request the Journal to restrict the use of their personal data insofar as this limitation is not contrary to the law or the Terms of Use.

- Users may request their personal data to be deleted (the right to be forgotten) by the Journal, provided that the deletion does not conflict with the law or the Terms of Use.

- The User has the right to be informed:

- whether his or her personal data have been processed;

- for which purposes the Journal processes the personal data;

- the ways in which his/her personal data are processed;

- the types of personal data that Journal processes.

- The user undertakes not to interfere with and impede the Journal’s activities in the exercise of the provided rights.

- In case of non-fulfillment under the previous paragraph, the Journal reserves the right to delete the user's profile.

Disclaimer of Warranty and Limitation of Liability

Neither Pensoft and its affiliates nor any of their respective employees, agents, third party content providers or licensors warrant that the Journal service will be uninterrupted or error-free; nor do they give any warranty as to the results that may be obtained from use of the journal, or as to the accuracy or reliability of any information, service or merchandise provided through Journal.

Legal, medical, and health-related information located, identified or obtained through the use of the Service, is provided for informational purposes only and is not a substitute for qualified advice from a professional.

In no event will the Provider, or any person or entity involved in creating, producing or distributing Journal or the contents included therein, be liable in contract, in tort (including for its own negligence) or under any other legal theory (including strict liability) for any damages, including, but without limitation to, direct, indirect, incidental, special, punitive, consequential or similar damages, including, but without limitation to, lost profits or revenues, loss of use or similar economic loss, arising from the use of or inability to use the journal platform. The User hereby acknowledges that the provisions of this section will apply to all use of the content on Journal. Applicable law may not allow the limitation or exclusion of liability or incidental or consequential damages, so the above limitation or exclusion may not apply to the User. In no event will Pensoft’s total liability to the User for all damages, losses or causes of action, whether in contract, tort (including own negligence) or under any other legal theory (including strict liability), exceed the amount paid by the User, if any, for accessing Journal.

Third Party Content

The Provider is solely a distributor (and not a publisher) of SOME of the content supplied by third parties and Users of the Journal. Any opinions, advice, statements, services, offers, or other information or content expressed or made available by third parties, including information providers and Users, are those of the respective author(s) or distributor(s) and not of the Provider.

Cookies Policy

Cookies

a) Session cookies

We use cookies on our website. Cookies are small text files or other storage technologies stored on your computer by your browser. These cookies process certain specific information about you, such as your browser, location data, or IP address.

This processing makes our website more user-friendly, efficient, and secure, allowing us, for example, to allow the "Remember me" function.

The legal basis for such processing is Art. 6 Para. 1 lit. b) GDPR, insofar as these cookies are used to collect data to initiate or process contractual relationships.

If the processing does not serve to initiate or process a contract, our legitimate interest lies in improving the functionality of our website. The legal basis is then Art. 6 Para. 1 lit. f) GDPR.

When you close your browser, these session cookies are deleted.

b) Disabling cookies

You can refuse the use of cookies by changing the settings on your browser. Likewise, you can use the browser to delete cookies that have already been stored. However, the steps and measures required vary, depending on the browser you use. If you have any questions, please use the help function or consult the documentation for your browser or contact its maker for support. Browser settings cannot prevent so-called flash cookies from being set. Instead, you will need to change the setting of your Flash player. The steps and measures required for this also depend on the Flash player you are using. If you have any questions, please use the help function or consult the documentation for your Flash player or contact its maker for support.

If you prevent or restrict the installation of cookies, not all of the functions on our site may be fully usable.

Author Guidelines

BDJ uses the highly automated and user-friendly ARPHA Writing Tool (AWT), which navigates you during the authoring and submission process. A Tips & Tricks practical guide to the technicalities within the tool is also available.

The submission process in BDJ starts with creating a manuscript in AWT via the Start new manuscript button. AWT offers a number of fixed yet flexible article template for each article type available in BDJ. Please note that the article templates cannot be changed once the writing process has started.

Select Article Type

Biodiversity Data Journal considers for publication the following categories of papers:

- Data Paper

Formal paper describing large datasets. The article should contain a link to the openly accessible dataset deposited in an internationally recognised repository (see instructions and list of recommended repositories in the Data Publishing Guidelines).

- Omics Data Paper

Description of omics data that can include information about the genome ((meta)genomics), transcription products ((meta)transcriptomics), protein products ((meta)proteomics), and metabolic products ((meta)metabolomics). More information can be found here.

- Methods

Description of novel methodologies and methods used in biodiversity research and biodiversity informatics.

- R Package

Description of an R Package including information on its purpose, installation and usage.

- Software Description

Description of software or an online tool or platform that contains a link to the openly accessible code of the described tool.

- Interactive Key

Online interactive identification key of a given higher taxon or а species group that contains a link to the openly accessible online key.

- Research Article

Publication of a study presenting significant new and original information, other than taxonomic, nomenclatural, faunistic or floristic data. For the latter, please use the "Taxonomy & Inventories" template.

- Taxonomy & Inventories

This manuscript template is designed to encompass various research topics, related to taxonomy, nomenclature, faunistic or floristic explorations and inventories, for example: revision of a group of species or a higher taxon (genus, tribe, family, etc.) comprising nomenclatural and taxonomic novelties, description of new taxa, regional or global species checklists and others. Please note that manuscripts containing such information should use this instead of a Research Article template!

- Species Conservation Profile

A single or multiple IUCN species assessment report(s) imported and edited in an IUCN-compliant species template.

- Alien Species Profile

Assessment report of alien or invasive species following an IUCN-compliant species template. After publication, the article can be integrated with the Global Invasive Species Database (GISD).

- Editorial

Opinion article written by the senior editorial staff.

- Short Communication

Short articles (usually 3-4 pages) addressing preliminary findings of higher interest, unique approaches, controversial opinions, new methods or innovative technologies etc. that demand fast publication.

- Commentary

Short free-text publication meant to draw attention or criticise a previously published work. It does not include original data.

- Forum Paper

Publication meant to initiate or respond to participate in a discussion on a particular scientific topic.

Manuscripts will be accepted for publication only if the following criteria are fulfilled:

- Papers and associated data must contribute to a better understanding of the topic under scrutiny. Studies that have already been published or submissions that are currently under consideration for publication elsewhere will not be accepted for publication.

- Previously published information should be considered and cited in compliance with the good academic practice. References should be complete and accurate. All figures included in manuscripts should be copyright free and duly acknowledge the original source.

- All data underpinning an article, including data tables on which graphs are produced, must be published alongside the paper, e.g. as supplementary files, or links to external repositories where data are deposited, and contain sufficient metadata to facilitate data discovery.

- Manuscripts must be submitted in English - either British/Commonwealth or American English. Authors should confirm the English language quality of their texts or alternatively request thorough linguistic editing prior to peer-review at a price. Manuscripts written in poor English are a subject of rejection prior to peer-review.

- Manuscripts should be concisely written, in a good academic style, and follow a logical sequence. The voice - active or passive - and the tense used should be consistent throughout the manuscript. Results should be clearly and concisely described and supported by the data published with the article, or data published elsewhere but linked to the article.

Materials and Methods

In line with responsible and reproducible research, as well as FAIR data principles, we highly recommend that authors describe in detail and deposit their science methods and laboratory protocols in the open access repository protocols.io.

Once deposited on protocols.io, protocols and methods will be issued a unique digital object identifier (DOI), which could be then used to link a manuscript to the relevant deposited protocol. By doing this, authors could allow for editors and peers to access the protocol when reviewing the submission to significantly expedite the process.

Furthermore, an author could open up his/her protocol to the public at the click of a button as soon as their article is published.

Stepwise instructions:

1. Prepare a detailed protocol via protocols.io.

2. Click Get DOI to assign a persistent identifier to your protocol.

3. Add the DOI link to the Methods section of your manuscript prior to submitting it for peer review.

4. Click Publish to make your protocol openly accessible as soon as your article is published (optional).

5. Update your protocols anytime.

Criteria for Publication

- Originality: Papers and associated data should be sufficiently novel and contribute to a better understanding of the topic under scrutiny. Please consider the examples below to judge if your manuscript might be suitable for BDJ:

- Example 1: Single species occurrence records (e.g., new country or province records) ARE NOT encouraged for submission in BDJ and will most probably be rejected, unless they contain detailed studies and new information on other aspects of species' morphology, genomics, biology, ecology, distribution, etc. (see also Examples 2 and 3 below).

- Example 2: A single species observation must be SIGNIFICANT, either because the species is important in some regard (e.g., medically, or by being an invasive species, or by being endangered, or by being important in biocontrol or biosecurity, etc.), or it expands the species range considerably and represent unexpected discovery of biogeographical or other significance. An additional argument for acceptability of a manuscript could be the presence of images and multimedia (acccompanied by any other new ecological/ethological data), if a species had never been illustrated, or filmed, or recorded for songs. Single species observations are considered novel, if they bring new information based on author(s) personal investigations and do not just repeat or compile already published information.

- Example 3: Multiple species occurrence records are welcome, however occurrence data are considered novel, if they significantly extend the ranges (geographical, temporal or habitat type), or list new country/province records of several species, or concern taxa of high natural or social importance, or feature taxa that are data-poor; occurrence data will NOT be considered novel and suitable for publication, if they only list new localities of a well-known and common (data rich) taxon within a well-studied region.

- Example 4: A local checklist is considered novel, if it includes new data from a locality; a local checklist is NOT considered novel if it is mostly confirmatory and repetitive and lists common species from a locality in a well-studied region.

- Example 5: Stand-alone publications of whole genome or complete mitochondrial genome sequencing are acceptable only if they are part of a complete phylogenetic study and have a significant contribution to the systematics of the taxon.

- Example 6: A data set described in a data paper is considered sufficiently novel if it includes new and mostly unpublished data on a subject, or is a compilation of data on a subject from various appropriately credited, published and/or unpublished sources, or represents new transformations or interpretations of data, including significant data corrections. If the data set is a compilation of data from different sources, all data contributors should be appropriately credited, e.g. through co-authorship or citation, while respecting the use licenses associated with the original data sets. If the data paper describes a dynamic data set (the content of which is expected to change) it is strongly recommended to include links and identifiers in the Data Resources section of the paper to both the dynamic data set and to a data dump deposited in an international archive (e.g. Zenodo). The data dump should reflect the dynamic data as it existed at the time of manuscript submission.

- Data are published: All data underpinning an article, including data tables on which graphs are produced, must be published alongside the paper, e.g. as supplementary files, or links to external repositories where data are deposited, and contain sufficient metadata to facilitate data discovery.

-

Structure: Manuscripts should be concisely written, in a good academic style, and follow a logical sequence. Results should be clearly and concisely described and supported by the data published with the article, or data published elsewhere but linked to the article.

-

Previous research: Previously published information should be considered and cited in compliance with the good academic practice. References should be complete and accurate, where possible including DOIs or links to the article.

Prepare Your Manuscript

Manuscripts for the Biodiversity Data Journal can only be submitted from the online, collaborative, article-authoring ARPHA Writing Tool (AWT) that provides a large set of pre-defined, but flexible, article templates.

To facilitate the writing process, the AWT also provides an automated search and import function from external databases including: electronic registries; catalogues; occurrence data in Darwin Core format; and reference bibliographies.

In the AWT-environment, the authors may invite external contributors, such as mentors, potential reviewers, linguistic and copy editors, colleagues, etc., who are not authors, but may watch and comment on the text during the preparation of the manuscript.

Please consider the following steps, illustrated at the figure below:

- Start a manuscript in the ARPHA Writing Tool (AWT)

- Validate and submit it when ready to the Biodiversity Data Journal

- Make corrections and respond to reviewers and editors completely online

- Submit the revised version at the click of a button

- Work with our copyeditors on final improvements

- Enjoy publication within 3 days after final acceptance

For more information, you may also look at the Editorial Beyond dead trees: integrating the scientific process in the Biodiversity Data Journal and press release The Biodiversity Data Journal: Readable by humans and machines.

Author Contributions

The journal is integrated with Contributor Role Taxonomy (CRediT), in order to recognise individual author input within a publication, thereby ensuring professional and ethical conduct, while avoiding authorship disputes, gift / ghost authorship and similar pressing issues in academic publishing.

During manuscript submission, the submitting author is strongly recommended to select a contributor role for each of co-author, using a list of 14 predefined roles, i.e. Conceptualization, Methodology, Software, Validation, Formal analysis, Investigation, Resources, Data Curation, Writing - Original draft, Writing - Review and Editing, Visualization, Supervision, Project administration, Funding Acquisition (see more). Once published, the article will be including the contributor role for all authors in the article metadata.

Authorship of AI

Preprints

The journal is integrated with the ARPHA Preprints platform, thereby allowing authors to post their pre-review manuscript as a preprint by simply checking the relevant box while completing the submission of their manuscript.

Due to the integration, the authors are not required to re-format or submit any additional files, as the system uses the manuscript to automatically generate a preprint. Subject to a basic editorial screening, the preprint will be posted on ARPHA Preprints within a few days after the manuscript’s submission.

When submitting their manuscripts and requesting a preprint publication authors must keep in mind that preprints are preliminary versions of works accessible electronically in advance of publication of the final version. They are not issued for the purposes of botanical, mycological or zoological nomenclature and are not effectively/validly published in the meaning of the Codes. Therefore, papers containing or dealing with nomenclatural novelties (new names) or other nomenclatural acts (designations of type, choices of priority between names, choices between orthographic variants, or choices of gender of names) will NOT be posted as preprints.

When requesting a preprint publication authors agree that a withdrawal of the published and indexed preprint is only possible if there is a substantial reason for that, i.e. major scientific errors, data fabrication, plagiarism or law infringement. The rejection of the manuscript by the journal is not a valid reason for withdrawal of the preprint. Find out more in this FAQ-section.

Explore the Benefits of posting a preprint or visit ARPHA’s blog to learn more about ARPHA Preprints.

Find more about how to submit your preprint in the ARPHA Manual.

Peer Review

Text and data submitted to this journal will be formally peer reviewed and evaluated for technical soundness and the correct presentation of appropriate and sufficient metadata. All manuscripts undergo a pre-submission technical evaluation in the ARPHA Writing Tool (AWT) environment. The scientific quality and importance of the paper and data will be further judged by the scientific community, through a novel, community-based pre-publication and post-publication peer review.

Reviewers may opt to be anonymous or to disclose their names. The deadlines for the peer review and editorial processes are strict and limited to a maximum of two months after submission.

The peer review process and deadlines described below are articulated on the assumption that the contributions are technically well-prepared and concisely written so that the peer-review is easy, straightforward and not requiring much time from the reviewer.

What is "community peer review"?

It is evident that the peer-review system is increasingly under strain. Our response to this situation is to decrease the load on each individual reviewer without in any way compromising the quality of the final product. The purpose of community peer review is to distribute effort, increase transparency, engage the broader community of experts, and enhance the quality of the science we publish.

Stepwise description of the peer review and editorial process

1. Upon submission, the manuscript is assigned to the Subject Editor responsible for the topic by the in-house Assistant Editor. The Subject Editor is alerted by email.

2. The Subject Editor reads the manuscript and decides if it complies with the journal's scope and should be processed for peer review.

3. The Subject Editor sends review requests to two or three "nominated" reviewers and several other "panel" reviewers.

Note-1: How editors invite reviewers? The journal's database will provide a list of potential reviewers and, if necessary, the editor can add additional names to the list. Review requests will be emailed by a ‘single-click’ button.

Note-2: "Nominated" and "Panel" reviewers. The difference between "Nominated" and "Panel" reviewers is that "Nominated" reviewers are expected to provide a formal review by the deadline; "Panel" reviewers are invited but not required to evaluate the manuscript. Both "Nominated" and "Panel" reviewers can propose changes and corrections, and make comments in the manuscript online and submit a concise reviewer's form.

Note-3: "Community" and "public" peer review. "Community" peer review means that during the review process the manuscript is visible only to the editor, the reviewers and the authors. We are planning to introduce soon an entirely public review process where authors may opt to make their manuscript available for comment by all registered journal users. Reviewers may opt to stay anonymous or disclose their names in either case.

4. The Subject Editor receives a notification email if the nominated reviewer agrees or declines to review the manuscript. In the latter case the editor can appoint alternative reviewers.

5. Reviews are expected within 10 days and can be extended on demand. The Subject Editor will then decide to accept, reject, or request revision of the manuscripts.

Note-4: Provision of reviews. Reviewers will be prompted by automated email notification sent one day before the deadline. In case of delay, the review request can be cancelled automatically, unless an extension has been requested.

6. The authors must provide a revised version of their manuscript within one week, but can ask for an extension, if needed.

7. After submission of the revised version, the Subject Editor compares it against the reviews through an easy-to-use online tool and decides to accept or reject the manuscript. The authors may be asked to make additional revisions, OR in case of substantial changes, the reviewing procedure will be started again.

8. The manuscript will be formatted, proof-read, copy-edited and published within two weeks after acceptance.

Guidelines for reviewers and editors

Reviewers and editors are expected to evaluate the completeness and quality of the manuscript text, related dataset(s), models, workflows or software and their description (metadata), as well as the publication value of data, models, software or workflows. This may include the appropriateness and validity of the methods used, compliance with applicable standards during collection, management and curation of data, and compliance with appropriate metadata standards in the description of the data resources.

The following aspects of evaluation will be considered:

- Originality

- Is the paper sufficiently novel and does it contribute to a better understanding of the topic under scrutiny? Is the work rather confirmatory and repetitive?

- Previous research

- Is previous research adequately incorporated into the paper?

- Are references complete, necessary and accurate?

- Is there any sign that substantial parts of the paper are copied from other works?

- Quality of the manuscript

- Do the title, abstract and keywords accurately reflect the contents and data?

- Is the manuscript consistent, suitably organised and written in grammatically correct English?

- Are the relevant non-textual data and media (data sets, audio and video files, models, software) also available as supplementary files to the manuscript or as links to external repositories?

- Have abbreviations and symbols been properly defined?

- Are conflicts of interest, relevant permissions and other ethical issues addressed in an appropriate manner?

- Quality of the data

- Are the data completely and consistently recorded within the dataset(s)?

- Does the data resource cover scientifically important and sufficiently large region(s), time period(s) and/or group(s) of taxa to be worthy of publication?

- Are the data consistent internally and described using applicable standards (e.g. in terms of file formats, file names, file size, units and metadata)?

- Are the methods used to process and analyses the raw data, thereby creating processed data or analytical results, sufficiently well documented that they could be repeated by third parties?

- Are the data correct, given the protocols? Authors are encouraged to report any tests undertaken to address this point.

- Is the repository to which the data are submitted appropriate for the nature of the data?

- Consistency between manuscript and data

- Does the manuscript provide an accurate description of the data?

- Does the manuscript properly describe how to access the data?

- Are the methods used to generate the data (including calibration, code and suitable controls) described in sufficient detail?

- Is the dataset sufficiently novel to merit publication?

- Does the manuscript put the data resources being described properly into the context of prior research, citing pertinent articles and datasets?

- Have possible sources of error been appropriately addressed in the protocols and/ or the paper?

- Is anything missing in the manuscript or the data resource itself that would prevent replication of the measurements, or reproduction of the figures or other representations of the data?

- Are all claims made in the manuscript substantiated by the underlying data?

Pensoft journals support the open science approach in the peer-review and publication process. We encourage our reviewers to open their identity to the authors and consider supporting the peer-review oaths, which tend to be short declarations that reviewers make at the start of their written comments, typically dictating the terms by which they will conduct their reviews (see Aleksic et al. 2015, doi: 10.12688/f1000research.5686.2 for more details):

Principles of the open peer-review oath

- Principle 1: I will sign my name to my review

- Principle 2: I will review with integrity

- Principle 3: I will treat the review as a discourse with you; in particular, I will provide constructive criticism

- Principle 4: I will be an ambassador for the practice of open science

Post-acceptance Procedure

Frequently Asked Questions (FAQ)

1. Does Biodiversity Data Journal publish only data?

NO! The journal focuses on data, but one can publish analyses and discussions, within the article, as in any other journal.

2. Does Biodiversity Data Journal require all data underlying an article to be published as well?

YES! All small data sets that underpin an article should be imported in the text (e.g., Darwin Core occurrence data, checklists, data tables, literature references) or uploaded as supplemnetary files (e.g., a data table used to create a graph). Large and complex data sets should be deposited in an internationally recognized repository (see Data publication section for details).

3. What kind of data does Biodiversity Data Journal publish?

Any kind of data related to biodiversity, for example: species occurrence data, local or regional checklists, inventories, genomic data, morphological descriptions, ecological observations, environmental data, etc.

4. What is the minimum "publishable" manuscript that can be submitted to the journal?

Any manuscript that brings novel information on any organism from any part of the world. Manuscripts are expected to demonstrate novelty, so it is unlikely that, for instance, a single observation would be sufficient. Please carefully consider our Criteria for publication before you decide to submit a manuscript to BDJ.

5. Why do you define fixed templates for articles?

Templates include some mandatory elements, but they are not fixed. Authors can additional sections or subsections in a manuscript. Using the templates is necessary because the journal's online peer-review and editorial system are designed to automate parts of the publication process to deliver both fast turnover and low cost. These systems do not accept manuscripts written in text processors (e.g., MS Word, or ODT) because they cannot be automated in this way.

6. What? Does Biodiversity Data Journal really NOT accept manuscripts written in MS Word?

NO, it does not! To keep the costs low and affordable for all, manuscripts submitted to the journal must either be written either within a specially designed tool (Pensoft Writing Tool, or PWT) or submitted from integrated external platforms, such as Scratchpads or GBIF Integrated Publishing Toolkit (IPT).

7. What does mean "public", and "community" peer-review?

"Community" peer review means that during the peer-review process the manuscript is visible only to editor, the reviewers and the authors; this is the traditional method in academic publishing and is the default option. Authors may opt, however, to make their manuscript available for comments from all registered journal users ("public" peer review). Reviewers may opt to stay anonymous or disclose their names in either case.

For more information, you may also look at the Editorial Beyond dead trees: integrating the scientific process in the Biodiversity Data Journal and press release The Biodiversity Data Journal: Readable by humans and machines.

8. What is a "Data Paper"?

A "Data Paper" is a scholarly journal publication whose primary purpose is to describe a dataset or a group of datasets, rather than to report a research investigation. As such, it contains facts about data, not hypotheses and arguments in support of the data, as found in a conventional research article. Its purposes are three-fold:

• to provide a citable journal publication that brings scholarly credit to data publishers;

• to describe the data in a structured human-readable form;

• to bring the existence of the data to the attention of the scholarly community.

If you are interested to learn more about it, you may have a look at our Data Publishing page, or the Data Paper Poster.

Data Publishing Guidelines

By submitting to this journal, authors agree to make the data that underpin or are described in their articles publicly available. Authors must include a separate "Data resources" section in their articles, listing datasets and where they are deposited (including accession numbers, DOIs or other persistent URL identifiers). For more information, please see our detailed Strategies and guidelines for scholarly publishing of biodiversity data.

Please remember that publication of data associated with your article in machine-readable form (databases, data sets, data tables) in this journal is mandatory!

The journal will publish papers that strictly adhere to theadhere the rules of the last edition of the International Code of Nomenclature for algae, fungi, and plants. To assure this, authors are requested to follow the instructions below:

Authors of novel fungal taxa must register the new names in only one registry, e.g. either in MycoBank or Index Fungorum but not in both! Please note that the registries regularly coordinate sharing of data and have arranged an informal agreement to only accept the first listed number of any name. Registration of the same new name in multiple registries is considered an inappropriate practice, which creates a considerable confusion and extra work to the registries and necessitates the depreciation of the additional registrations at a later stage!

We provide various modes of data publishing:

- Import of data files in the text (e.g., Darwin Core occurrence data, checklists, specimen and biotic intercations data tables, literature references).

- Supplementary data files (up to 20 MB each) that support graphs, hypotheses, results, etc. published with the article.